Visualization Devices

The Graphics & Visualization Lab, located at NASA’s Glenn Research Center in Ohio, uses advanced technology to design and develop software applications for various audiences. The team aims to inform children, adolescents and adults about advanced technology used to support employees at Glenn.

The GVIS Lab uses Virtual Reality (VR) and Augmented Reality (AR). These technologies recreate unique settings and create immersive experiences. Not only are these devices entertaining, but research indicates VR and AR help students who have difficulty learning general material in school. Students may have a better chance of learning STEM material faster if they use VR or AR during instructional hours. Advanced digital devices can transform people’s lives, as they did for NASA’s astronauts.

At one point, using advanced technology like VR and AR to aid in space exploration was fairly new to astronauts. Today, NASA programmers use actual footage and data from space to create high-quality VR and AR visualizations, which astronauts use to make their training more accurate.

VR and AR are creating unforgettable experiences for many people, which is one of the reasons the GVIS Lab chooses to use these devices during outreach. These devices help explore places where no human has ever set foot!

GVIS Lab Technologies

What is Scientific Visualization?

Collected data can be difficult to understand without a visual representation. To make it easier, the collected numbers can be represented through graphics or visualizations. This allows a group of people to gain insight into proposals, medical procedures, research and studies that would be difficult to understand without graphics.

What are VR and AR?

What is Virtual Reality (VR)? VR describes 3D models that are encased in a virtual world or environment that can be interacted with in a seemingly real or physical way by a person using special equipment.

Examples: Google Arts and Culture, Virtual Reality Surgical Training

What is Augmented Reality (AR)? AR is technology that superimposes a computer-generated image on a user’s view of the real world, thus providing a composite view.

Examples: Snapchat Filters, Pokémon Go, Sephora Virtual Artist, Mission ISS

Why is Glenn using VR and AR for outreach? VR and AR teach the community about Glenn’s mission at NASA.

What is Natural User Interface (NUI)?

In the past, users had to adapt to technology, but today NUI has eliminated some of those limitations by letting users rely on intuitive behaviors to maneuver and manipulate devices. To understand NUI in context, think of your day-to-day physical behaviors, such as the movement of your fingers when you pick up an object. Those movements are intuitive. Now, transfer that same idea to applications used on computers or handheld devices such as a touch screen phone or tablet. The movement of your fingers allows you to manipulate an application with basic motor skills, which makes advanced technology much easier to use and creates a Natural User Interface.

Simpler Explanation: Interaction of basic motor skills with advanced technology

Examples: Touch screen phones, tablets, cameras, Siri (virtual assistant)

Applications and Devices used during Outreach

Hand Tracking

This is a hand tracking device that is well known for its user-friendly software and hardware. The device is rectangular in shape, and it can fit nicely in the hand of an average adult. It has multiple camera sensors that expertly track the hands of a human.

How do the sensors detect movement?

This device has three infrared cameras that detect an individual’s hands. The cameras identify key points of the hand, such as joints and knuckles, to construct a virtual replica of the user’s hand.
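To illustrate how tracked key points can become something an application reacts to, here is a minimal Python sketch. It is not the device's actual software: the `KeyPoint` class, the point names, the millimetre units, and the 25 mm pinch threshold are all assumptions made up for this example.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class KeyPoint:
    """One tracked point on the hand (hypothetical format, millimetres)."""
    name: str
    x: float
    y: float
    z: float


def distance(a: KeyPoint, b: KeyPoint) -> float:
    """Straight-line distance between two key points."""
    return math.dist((a.x, a.y, a.z), (b.x, b.y, b.z))


def is_pinching(hand: dict, threshold_mm: float = 25.0) -> bool:
    """Treat the hand as pinching when thumb and index tips are close.

    The threshold is an illustrative guess, not a value from any real device.
    """
    return distance(hand["thumb_tip"], hand["index_tip"]) < threshold_mm


# A made-up frame of tracking data: the "virtual replica" is just a
# collection of named 3D points.
hand = {
    "wrist": KeyPoint("wrist", 0.0, 0.0, 0.0),
    "thumb_tip": KeyPoint("thumb_tip", 10.0, 120.0, 5.0),
    "index_tip": KeyPoint("index_tip", 22.0, 128.0, 9.0),
}

print(is_pinching(hand))
```

An application could run a check like this on every frame and, for example, let the user grab a virtual object while the pinch holds.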

How does the GVIS Lab use the device?

The GVIS Lab uses this device in multiple ways. One way is to create an app and mount this small USB peripheral onto a VR headset. With that setup, users can manipulate objects onboard the ISS, in the Pepper’s Ghost display, or in any other application that works well with the hardware.

VR Headset

This is a virtual reality (VR) headset that comes with two hand controllers. Users are able to view 3D models and feel completely immersed in a virtual environment. The user can move their head from side to side and look at their virtual area.

How does the GVIS Lab use the device in outreach events?

The GVIS Lab has several VR demonstrations for the headset, including an exploration of the International Space Station, a journey to follow an unmanned aircraft, and the Apollo 11 mission to the Moon accompanied by Neil Armstrong.

AR Headset

This device is an interpretation of “mixed reality”, also known as augmented reality (AR). It combines advanced optics, various sensors, and a holographic processor so that its imagery blends effortlessly into the environment. To make the hardware comfortable to use, an adjustment wheel secures the device on the user’s head.

Why is an AR headset used during outreach?

The device displays holograms and the GVIS Lab uses this technology to show real surface data of Mars from the Curiosity Rover.

Second-generation Augmented Reality Headset

Recently, the GVIS Lab acquired the brand-new second-generation augmented reality headset, which has a larger field of view, longer battery life, more processing power, and a better fit around the head than its predecessor. The visor is also hinged so that users can enter and exit augmented reality as they please.

MR Headset

The mixed reality headset uses both hand tracking and a controller for user input. The device supports spatial computing: its built-in sensors map the user’s physical space so that virtual content can be placed within it.

The GVIS Lab is currently developing new applications for the Magic Leap that can be used during NASA tours and outreach demonstrations.

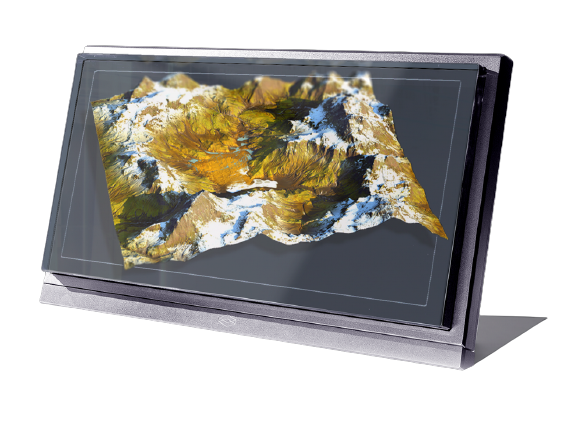

Interactive 3D Display

At first glance, the interactive 3D display looks like a large computer monitor; however, it is much more than that! The visualization system is a device that allows users to view objects in stereoscopic 3D. The glasses used with this device have reflective tabs. The computer tracks these tabs and adjusts the display to give the user an immersive experience. By using 3D glasses and a stylus, users can interact with virtual content by pulling it off the screen and seemingly into the real world.
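As a rough illustration of why tracking the glasses matters, a stereoscopic renderer needs a separate viewpoint for each eye. The Python sketch below derives both eye positions from a tracked head position. It is not the display's actual software: the function name, the coordinate convention, and the 63 mm eye separation are assumptions chosen for this example.

```python
import math


def eye_positions(head, right, ipd_m=0.063):
    """Return (left_eye, right_eye) positions for stereo rendering.

    head  : (x, y, z) midpoint between the eyes, in metres (assumed tracked).
    right : vector pointing from the left eye toward the right eye.
    ipd_m : distance between the eyes; 0.063 m is an illustrative default.
    """
    length = math.sqrt(sum(c * c for c in right))
    if length == 0:
        raise ValueError("right vector must be non-zero")
    unit = tuple(c / length for c in right)
    half = ipd_m / 2
    left_eye = tuple(h - half * u for h, u in zip(head, unit))
    right_eye = tuple(h + half * u for h, u in zip(head, unit))
    return left_eye, right_eye


# Made-up tracking sample: head 0.4 m above and 0.6 m in front of the screen.
left_eye, right_eye = eye_positions(head=(0.0, 0.4, 0.6), right=(2.0, 0.0, 0.0))
print(left_eye, right_eye)
```

Rendering the scene once from each position, and updating both as the head moves, is what makes objects appear to sit in front of or behind the screen.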

Why is the interactive 3D display used during outreach?

The zSpace enables small group activity and interaction with other users within the same physical space. The GVIS Lab is currently making programs for the zSpace which illustrate NASA concept vehicles and interactive demonstrations of aerospace technology.

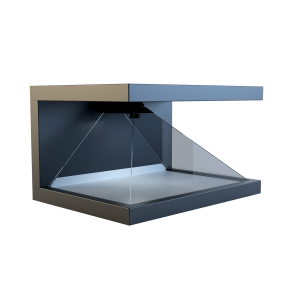

3D Holographic Display

The 3D holographic display is a 1-foot by 2-foot, 3-sided holographic viewing device used to present demonstrations in an innovative and intriguing way. Featuring built-in audio and high-end glass optics, the holographic display is a game changer in the world of immersive visualization. This display is set up frequently in the GVIS Lab for visitor tours and is also taken to major events to showcase NASA work in space communications and aeronautics.

The GVIS Team has modified the 3D holographic display so that a connected hand tracking device lets visitors manipulate the object in the display by moving their hands.

3D Volumetric Display

The 8K and 4K 3D volumetric displays are high-resolution holographic display tools used in the GVIS Lab. The 8K model features a 32” display screen and a 53° viewing cone, while the 4K model has a 15.6” display screen with a 58° viewing cone. The blockless design featured on both devices allows for eyewear-free 3D viewing, greatly increasing the viewing distance and viewing area of the displays. These devices are great for presentations, demonstrations, and collaborating within 3D environments. Within the GVIS Lab, the 3D volumetric displays have been used to show the X-57 SCEPTOR Concept Aircraft, for example.
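To give a feel for what the viewing cone angles mean in practice, the Python sketch below estimates how wide the 3D viewing zone is at a given distance. It is a simplification under stated assumptions: the quoted angle is treated as the full width of a cone centered on the display, the width of the screen itself is ignored, and the function name and the 1 m distance are illustrative.

```python
import math


def viewing_zone_width(cone_deg: float, distance_m: float) -> float:
    """Approximate width of the viewing zone at a given distance.

    Assumes a symmetric cone whose apex is at the center of the screen,
    so width = 2 * distance * tan(cone angle / 2).
    """
    half_angle = math.radians(cone_deg) / 2
    return 2 * distance_m * math.tan(half_angle)


# Angles from the text; the 1 m viewing distance is an assumed example.
for label, cone in (("8K", 53.0), ("4K", 58.0)):
    width = viewing_zone_width(cone, distance_m=1.0)
    print(f"{label}: about {width:.2f} m wide at 1 m")
```

Under these assumptions, both displays give a zone roughly one metre wide at a distance of one metre, which is enough for a small group standing side by side.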

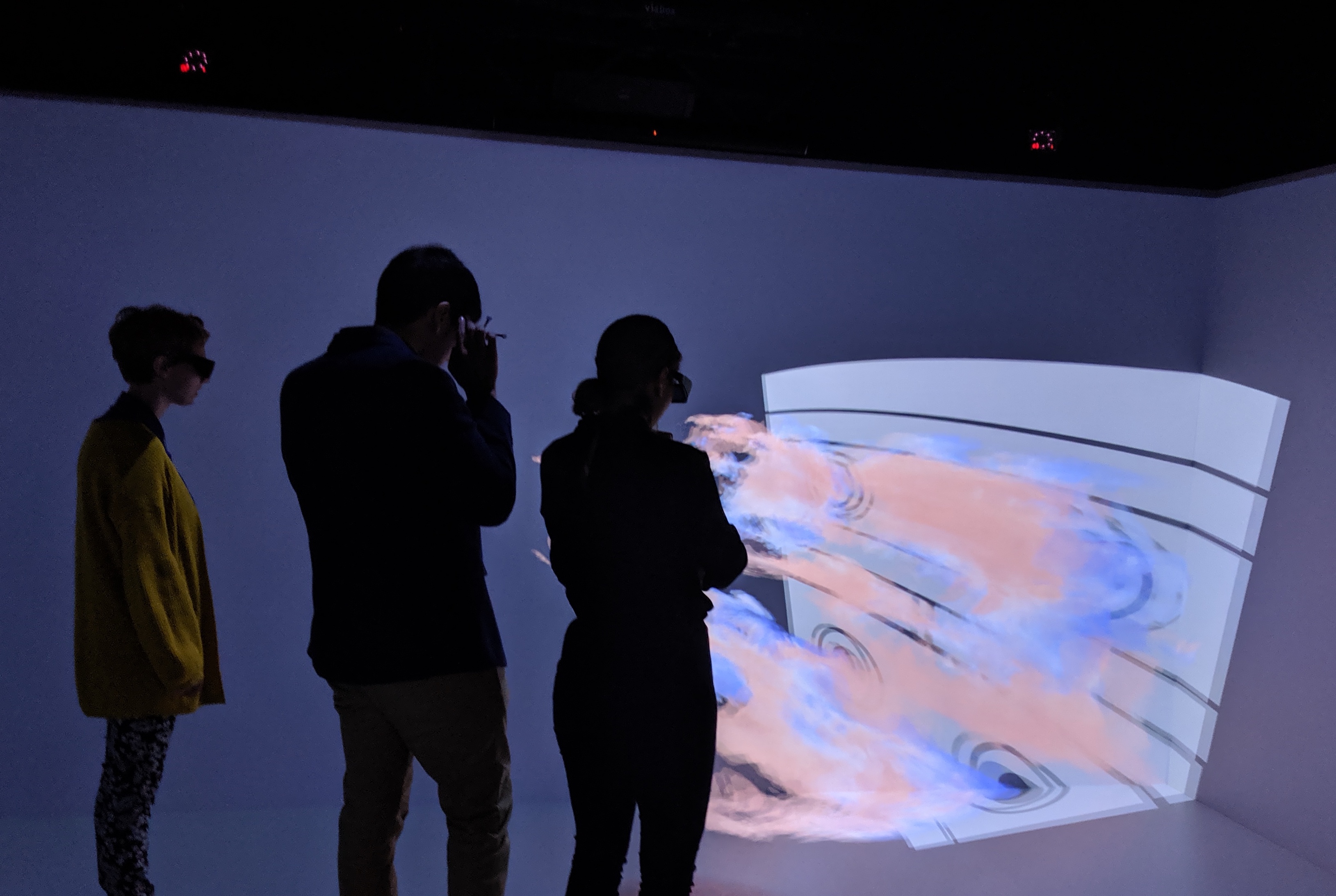

GRUVE Lab/CAVE Automatic Virtual Environment (CAVE)

Within the last few decades, the GVIS Lab has expressed interest in advanced technology, and more specifically in devices that illustrate virtual, augmented, and mixed reality.

Due to this interest, the GRUVE Lab at Glenn was established by the Intelligent Synthesis Environment Program. The purpose of this lab was to create an interactive display system that enhances NASA’s missions through visualizations. The GRUVE Lab is a VR system commonly called the Cave Automatic Virtual Environment (CAVE).

The CAVE is a unique immersive visualization tool that uses four stereo projectors to display virtual environments and models. By displaying a stereo view of computer-generated graphics, it lets one person or a group see and interact with 3D models without being completely isolated from the world.

Unlike traditional VR headsets, the CAVE requires only lightweight glasses for tracking and stereo separation, which can relieve some of the downsides of traditional VR methods. In some cases, users may still experience motion sickness and tiredness after a short period of time, but the overall experience is worthwhile.

Applications

Virtual Microgravity Science Glovebox in the International Space Station

The GVIS Lab uses an application called the Virtual Microgravity Science Glovebox which introduces the spacecraft through a VR application using the Oculus Rift and Leap Motion. In short, users are able to explore the spacecraft and enjoy 3D models that emulate the ISS.

Why is the ISS used during outreach?

- This application enables users to observe the details on the space station

- It allows users to examine their surroundings while exercising critical thinking skills

- The application encourages user engagement

Contact Us!

Need to reach us? You can send an email directly to the GVIS Team at GRC-DL-GVIS@mail.nasa.gov or to the Team Leader, Herb Schilling, at hschilling@nasa.gov.

Like our content? Follow us on social media @nasa_gvis on X and Instagram!

(Tech Spec Images and References: Microsoft, Aniwaa.com)